Regulation Radar: What Four LLMs Made of 890 EU Laws

March 2026

Quick Brief

- Experiment: Four open-source LLMs read 890 EU regulations and classified them by thematic domain and regulatory impact – one on a MacBook Air, the rest on rented H100s in Paris, no external API involved. Where possible, results were compared against EuroVoc, the EU’s own classification system.

- Why it matters: The fact that LLMs can read regulation was never really in question. What actually matters is whether their output is consistent enough to act on – and what happens when you check it against human judgement.

- Key finding: A 70B reasoning model that pauses to think before answering outperformed a 141B legal specialist trained specifically on law. On most regulations, the models agreed on the core subject – but not one was classified identically by all four. When they did agree, accuracy against the human baseline went up. When they didn’t, a human in the loop becomes advisable.

Context

The neat thing about bureaucracies is that they produce vast amounts of structured text. Which, in theory, should make them ground zero for language models.

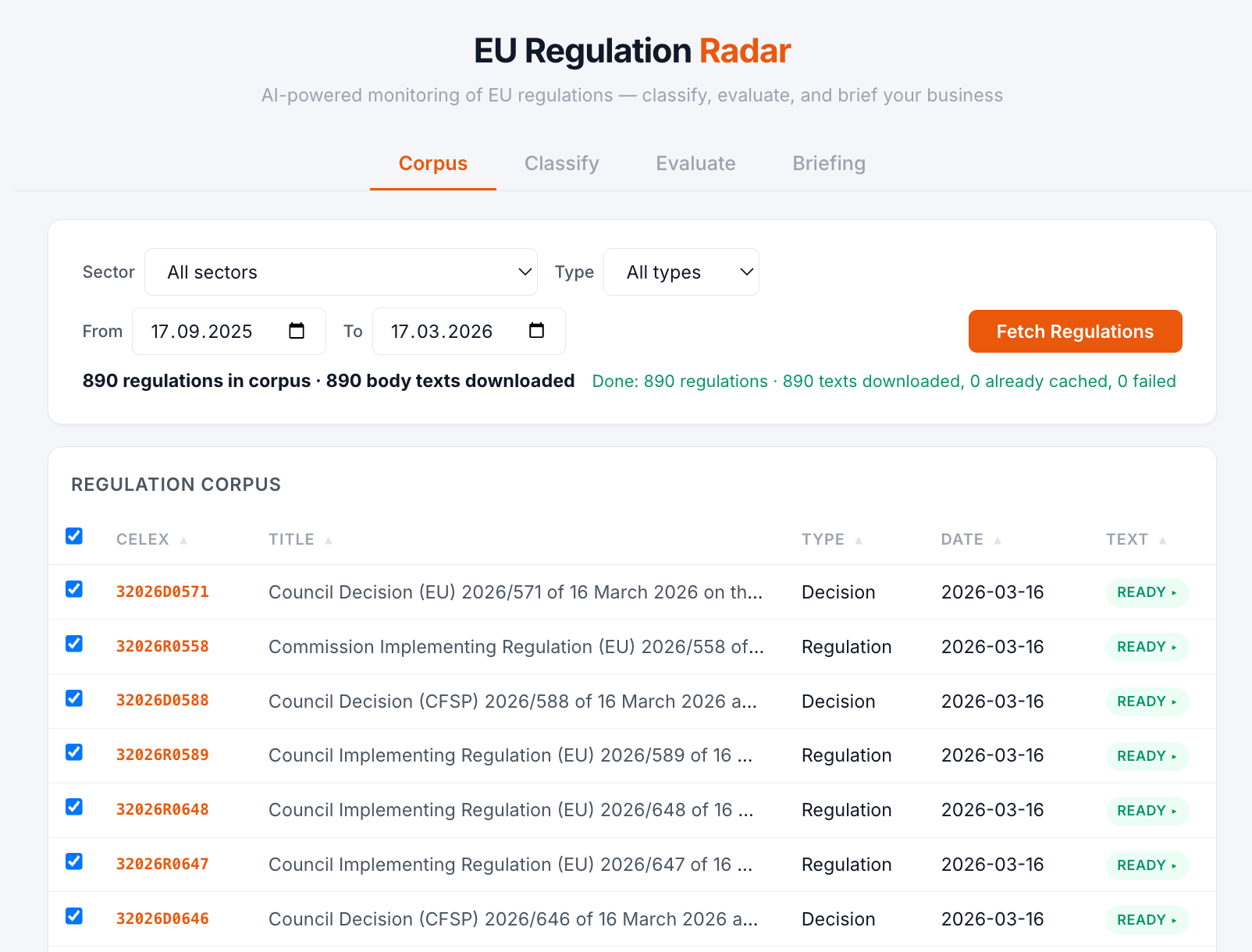

In the six months to March 2026 (17 September to 17 March, to be precise), the European Union published 890 pieces of binding legislation. Over five million words of regulations, decisions, and directives – roughly ten times the length of War and Peace, published in half a year.

Somebody has to read all of that.

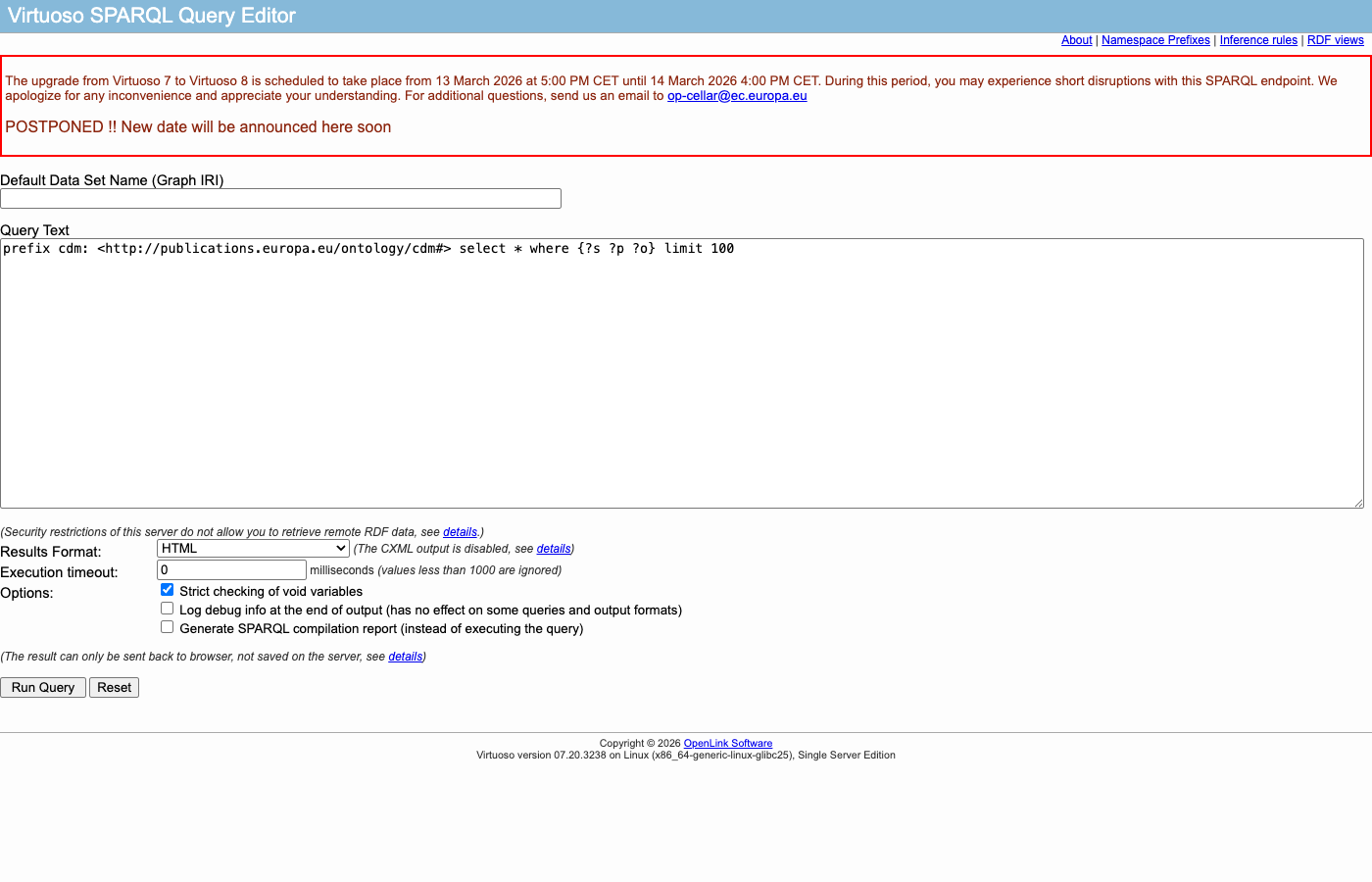

All EU legislation is publicly available through EUR-Lex – well-known to EU legal practitioners and arguably invisible to everyone else. A SPARQL endpoint lets you query legislation like a database. A bulk download API called CELLAR delivers full texts programmatically, in all 24 official EU languages, with structured metadata attached. A very well-organised government filing cabinet with an API.

The filing cabinet. With an API.

The filing cabinet. With an API.

But a filing cabinet is only useful if you know what is in it. Therefore, the EU doesn’t just publish legislation – it tags it. Every regulation gets classified using EuroVoc, a controlled vocabulary of roughly 7,000 terms organised into 21 top-level thematic domains – “Agriculture”, “Trade”, “Finance”, “Energy”, and so on. The tagging is done by professional librarians at the Publications Office, and has been running since 1995.

A previous experiment asked six models to classify ambiguous legal documents. They disagreed extensively – but there was no ground truth to grade against. This time, the librarians provided one, sort of – they tag each regulation with specific terms from EuroVoc’s ~7,000 concept vocabulary, and those terms sit within a fixed hierarchy that maps them to the 21 top-level domains. The models were asked to pick domains directly. So the comparison is not quite apples to apples, but it is structured, consistent, and publicly available, which is considerably better than no benchmark at all.

The conventional approach to regulatory monitoring is to give the documents to a compliance officer, who reads them, asks ChatGPT which parts matter, and writes a summary (also with ChatGPT). The unconventional approach is to notice that the filing cabinet already has an API – and that language models can read. You do not put a steam engine on a horse. You build a railway.

The Railway

Before any model reads anything, the data needs to arrive. The pipeline works in stages.

Stage 1: Fetch. A SPARQL query hits the EUR-Lex endpoint and retrieves all secondary legislation published in the last six months – 890 documents. For each: the CELEX identifier, title, date, document type, and the EuroVoc descriptors assigned by the human librarians. This is the ground truth we will grade against.

Stage 2: Download. The CELLAR API delivers the full text of each regulation. For our classification, the full text is overkill – a 400,000-word implementing regulation about carbon border adjustments does not need to be read in its entirety to know it belongs under “Energy” and “Trade”. The pipeline extracts the preamble: title, recitals, and the opening articles. Typically 1,000–3,000 tokens – enough to understand what the regulation does, without drowning the model in annexes.

Stage 3: Classify. Each regulation gets two prompts. The first asks the model to assign the document to one or more of the 21 EuroVoc domains – the thematic “what is this about?” question. The second asks for an impact assessment: is this ROUTINE (an administrative amendment nobody outside a specific agency will notice), MODERATE (new requirements for a specific sector), or HIGH (significant new obligations across multiple sectors, major compliance deadlines – on the scale of an AI Act or GDPR)?

Stage 4: Route. Regulations classified as HIGH impact get routed to a separate model for a detailed editorial briefing – what it does, who is affected, what the deadlines are, what the worst case looks like. Ideally, the full document goes in here, not just the preamble. This can run on the same infrastructure or a different machine entirely, depending on the model and the size of the legislation.

From SPARQL query to classified regulation. Swap the model, keep everything else. Click to enlarge.

In this experiment, the routing goes to Claude Sonnet via the Anthropic API. At this point we would typically publish the model and hardware specs with utmost transparency – unfortunately, Anthropic does not publish theirs. This is also the one step where data leaves the organisation’s infrastructure, though it does not have to: the briefing model can run internally if the use case demands it.

The open-source models classified between 1 and 30 regulations as HIGH out of 890 – roughly 1–3% of the corpus. Only those get sent to the expensive model. The other 97–99% never leave the building, which is where most of the cost savings come from.

The experiment: run this pipeline with four different models at the classification stage. Same 890 regulations, same two prompts, same temperature (0.1), same routing logic. Then compare – with the humans, and with each other.

For those who would rather skip the technical details and see what four language models think your business should be worried about: all 890 regulations, classified and briefed, are browsable here.

The Off-the-Shelf Option

Imagine this: a regulation gets published, and before you have finished your coffee, the pipeline has picked it up, classified it, assessed its impact, and produced a briefing – all on a laptop, no cloud costs, no data leaving the building.

That requires a model that actually fits on a laptop. An Apple MacBook Air with 16 GB of unified memory can realistically run models up to about 7 billion parameters in quantised form, which rules out anything above the small end of the model spectrum. But small is still something.

Since we are dealing with legal text, the choice fell on an old acquaintance: SaulLM-7B, a legal-domain model fine-tuned on court rulings and legislative text. It is the smaller sibling of the 54B model from the previous experiment – the one that needed an H100 to run. This one fits on a laptop. Ollama serves the model as a local API, using the M3’s integrated GPU via Metal for inference.

890 regulations, downloaded and waiting. Each one gets two prompts – domain and impact.

890 regulations, downloaded and waiting. Each one gets two prompts – domain and impact.

890 regulations, two prompts each, fed through a 7B model on a laptop. It took 15 hours and 41 minutes to get through all 1,780 prompts – roughly one minute per regulation to read the preamble, classify by domain, assess impact, and return a structured JSON response. You start it before bed, and by morning the laptop has opinions about 890 pieces of EU legislation.

Turns out, just because a MacBook Air can run a language model on EU legislation overnight does not mean it should.

Classifying hundreds of regulations at once – the actual use case for a regulatory monitoring system – requires something substantially more powerful than what fits in a laptop.

”More Power!”

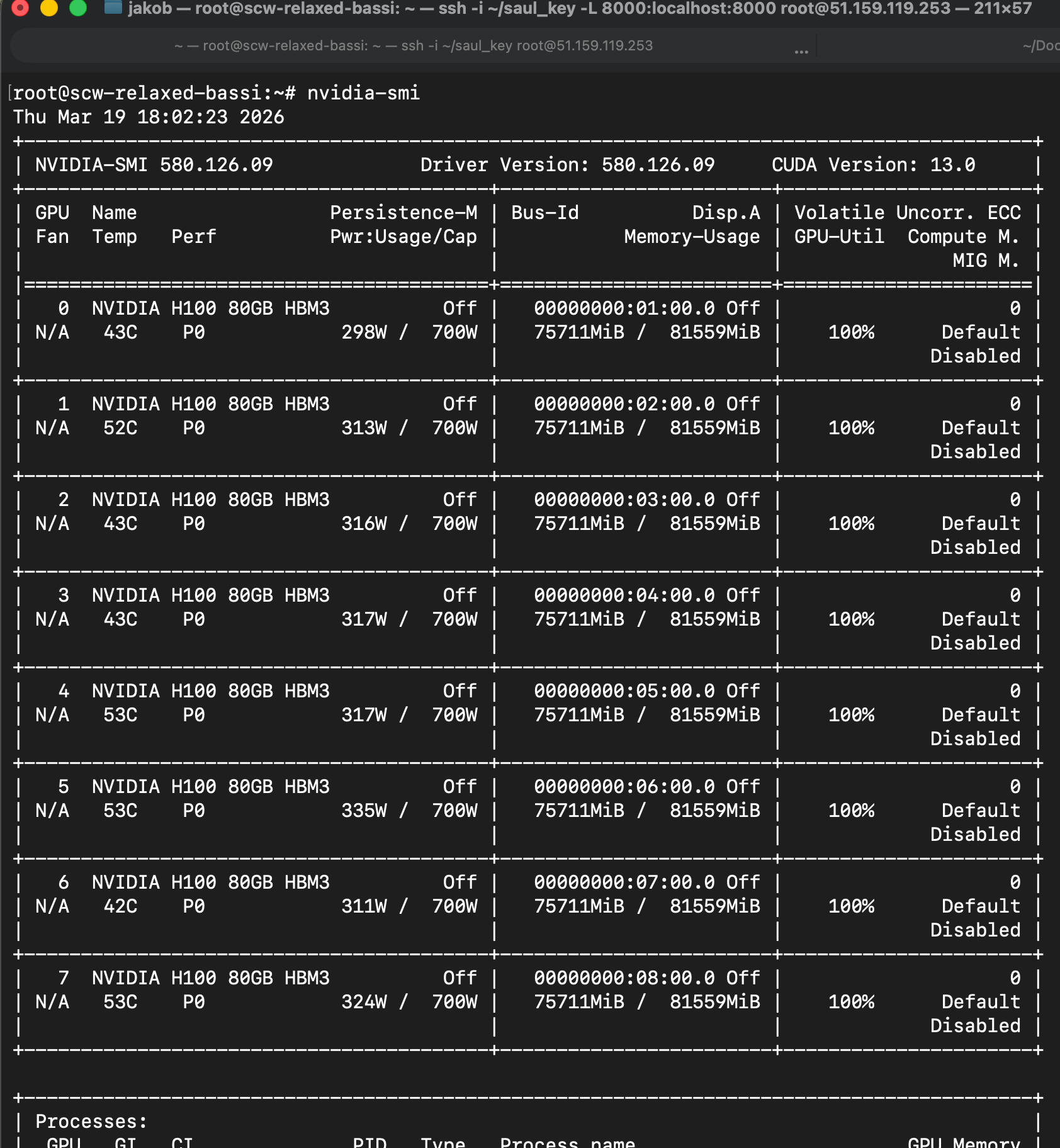

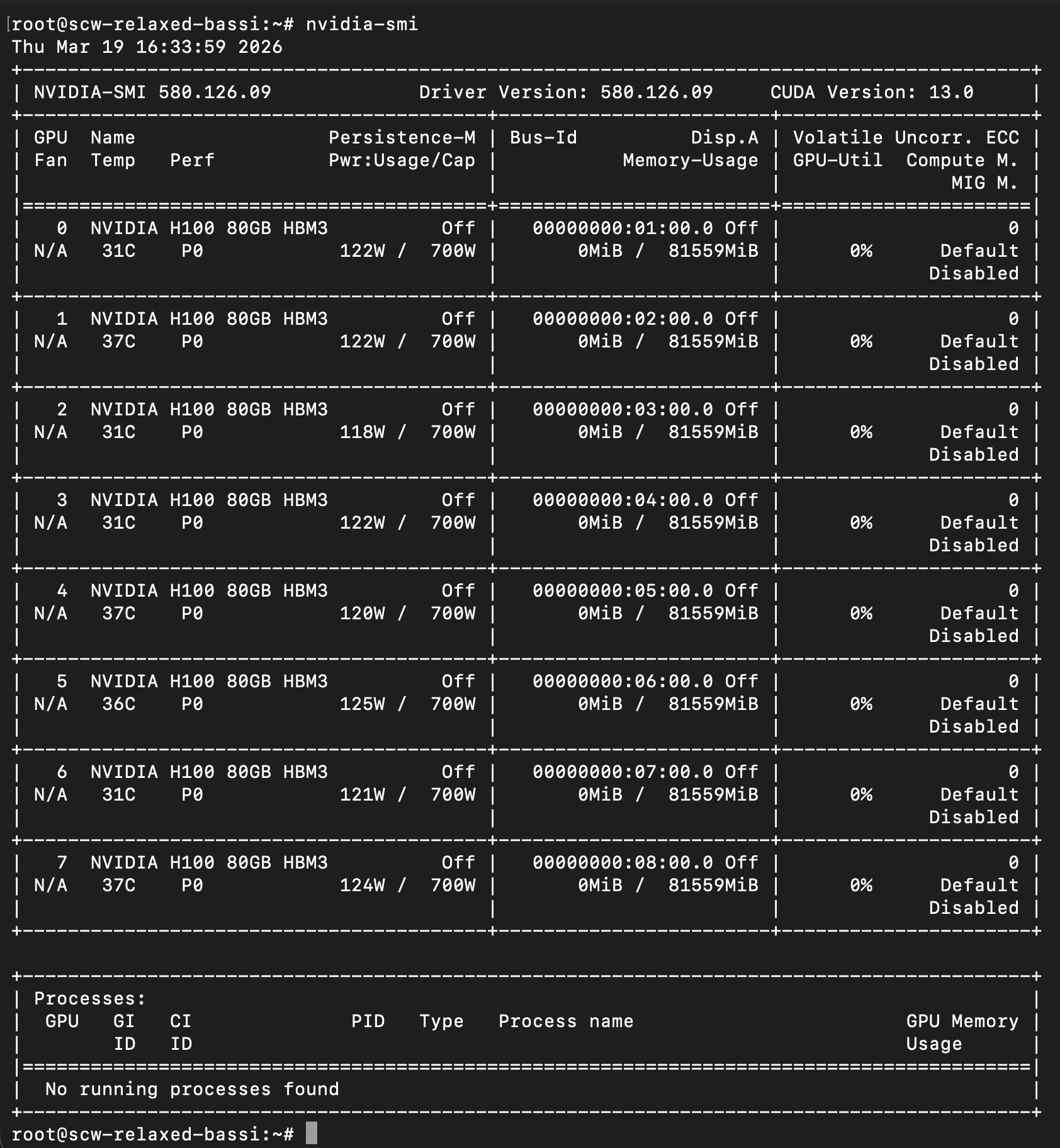

The previous experiment ran on a single Nvidia H100. This time, the plan was to test SaulLM-141B – twenty times the size of the laptop model – which does not fit on a single GPU. So what is better than one Nvidia H100? The answer, naturally, is eight Nvidia H100s.

A Scaleway GPU instance with that kind of quota required a short phone call with Paris to request approval – and a promise not to block the machine too long. At €23 per hour, one might assume they would have every incentive to let you keep it running, but apparently not.

Request granted: eight H100 SXMs, eighty gigabytes of VRAM each, 640 GB total, connected via NVLink – a setup that could comfortably run most open-source models in existence, with one notable exception.

With the clock ticking, the first order of business was downloading 1.3 terabytes of model weights from Hugging Face – 35 minutes of eight GPUs sitting completely idle. The inference server of choice was vLLM with tensor parallelism: the model’s weights split across all eight GPUs so they work together as one, which is what makes 140-billion-parameter models possible on hardware with 80 GB per GPU.

The pipeline code is identical to the laptop setup – same prompts, same routing logic – with the model endpoint swapped from a local Ollama server to the remote vLLM instance. No third-party API, no data shared externally.

Three models, ran sequentially:

EuroLLM-22B – yes, the EU funded the training of its own language model. Trained on all 24 official EU languages as part of the EuroLLM project, a consortium of European research institutions. This is the institutional candidate: the EU’s model reading the EU’s regulations.

SaulLM-141B – the largest in the SaulLM family. The 7B ran on the laptop, the 54B ran in the previous experiment, this one weighs in at 141 billion parameters. Same legal training data, twenty times the size of the MacBook model. The expectation was straightforward: bigger model, same specialisation, better results.

And then there was DeepSeek-R1.

642 GB into 640 GB

The original plan included DeepSeek-R1 – the full 671-billion-parameter reasoning model from the Chinese AI lab DeepSeek. At FP8 quantisation, its weights alone require approximately 642 GB of VRAM.

The cluster has 640 GB.

Three attempts were made. Each produced an out-of-memory crash at the model-loading stage – before a single token of regulation was read. The weights themselves filled the GPUs to 98.7% capacity with zero bytes left for anything else. The model physically did not fit.

The pivot was DeepSeek-R1-Distill-Llama-70B – the largest distilled reasoning variant. Distillation works like this: DeepSeek ran their full 671B model on a large set of problems, collected all the step-by-step reasoning traces it produced, and then used those traces as training data to teach a smaller model to reason the same way (paper). The “student” in this case is Meta’s Llama 3 70B – an American base model that learned Chinese-style chain-of-thought reasoning. At ~140 GB, it loaded with room to spare.

Why include a reasoning model at all? Most classification tasks do not require chain-of-thought. You read the text, you assign a label. But EU regulation is not always straightforward – a regulation about carbon border adjustments touches “Energy”, “Trade”, “Environment”, and possibly “Industry” depending on how you read it. A model that thinks through the ambiguity before committing to an answer might handle those multi-domain cases better than one that just pattern-matches. This model produces visible <think> tokens – you can literally watch it reason, step by step, before it gives its final classification.

All three GPU models finished in about half an hour each.

Eight H100s before and after being put to work.

EuroLLM-22B: 30.7 minutes, zero errors across 1,780 prompts. The EU’s own model read 890 pieces of EU legislation and concluded that virtually nothing of consequence had happened – 79% ROUTINE, and exactly one regulation flagged as HIGH impact. A model of institutional composure, if nothing else.

SaulLM-141B: ~34 minutes, and 51 parse errors – nearly 6% of documents where the model returned something other than valid JSON. This is one of the less charming features of large language models: even when the prompt says “respond in JSON format”, a verbose model can wander off-format, returning explanatory paragraphs where a JSON object was expected, wrapping JSON in markdown code fences the parser does not anticipate, or simply deciding mid-response that a structured answer would benefit from a preamble. The biggest model in the experiment had the worst discipline.

DeepSeek-R1-Distill-70B: ~31 minutes, one parse error. Sixteen HIGH, 43% MODERATE, 56% ROUTINE – the most balanced distribution of the four, and the only model that looked like it had actually thought about the question before answering. Which, given that it is a reasoning model, is presumably the point.

Those impact numbers – one model flagging 30 regulations as HIGH, another flagging one – deserve a closer look.

The Impact Question

EuroVoc does not tag regulations as “high-impact” or “routine”. There is no ground truth for this question – we can only compare the models to each other. And they do not agree.

| Model | Routine | Moderate | High |

|---|---|---|---|

| SaulLM-7B | 109 (12%) | 735 (83%) | 30 (3%) |

| EuroLLM-22B | 702 (79%) | 187 (21%) | 1 (0.1%) |

| SaulLM-141B | 633 (71%) | 211 (24%) | 15 (2%) |

| DeepSeek-R1 70B | 496 (56%) | 378 (43%) | 16 (2%) |

| Rows not summing to 100% reflect parse errors – regulations where the model failed to return valid output. | |||

Same 890 regulations, same prompt. One model flags 30 as high-impact. Another flags one.

SaulLM-7B sees danger everywhere. EuroLLM sees almost none. A regulatory monitoring system built on any single model’s impact assessment would either drown in false alarms or miss things that matter, depending entirely on which model you picked.

What does this look like in practice? A compliance team using SaulLM-7B as their regulatory radar would receive 30 high-priority alerts from six months of legislation – roughly one per week. The same team using EuroLLM would receive one. Total. In six months. Both systems would report high confidence in every single assessment. Neither would mention that the other one exists.

There is an irony here worth savouring: the smallest model in the experiment – the one that performed worst on every other metric, as we will see shortly – is also the most alarmed. The model that understands EU regulation least is the one most convinced it is all terribly important.

But none of this tells us which regulations are actually high-impact. It tells us that pointing a language model at a document and asking “does this matter?” produces an answer that says more about the model than about the document. Which raises a more fundamental question: are these models actually any good at reading regulation in the first place?

Checking the Answer Key

There is no official ranking of which EU regulations matter most, so the impact question cannot be graded directly. But there is a reasonable proxy: if a model cannot correctly identify what a regulation is about, it probably should not be trusted to tell you how important it is.

And for domain classification, we do have an answer key – the librarians at the Publications Office have been tagging these regulations for thirty years. Their domain assignments are the closest thing to a ground truth we have – not perfect, since they tag at the granular level and we map to the 21 top-level domains, but structured, consistent, and considerably better than guessing.

The overlap between a model’s assignments and the librarians’ gives us a score: 1.0 would mean perfect agreement. 0.0 would mean the model might as well have picked domains at random.

| Model | Params | Hardware | Runtime | Overlap |

|---|---|---|---|---|

| SaulLM-7B | 7B | MacBook Air M3 | 940 min | 0.33 |

| EuroLLM-22B | 22B | H100 ×8 | 31 min | 0.47 |

| SaulLM-141B | 141B | H100 ×8 | ~34 min | 0.50 |

| DeepSeek-R1-70B | 70B | H100 ×8 | ~31 min | 0.56 |

Overlap with thirty years of librarian classifications. 1.0 = perfect match. Nobody got close. DeepSeek won – with half the parameters and no legal training data.

The reasoning model wins. What does that mean concretely? When DeepSeek assigns domains to a regulation, those domains overlap with the human librarians’ assignments 56% of the time. SaulLM-141B – twice the size, trained specifically on legal text – manages 50%. The model that pauses and thinks before answering beats the model with more parameters and more legal training data.

The size ordering would predict: 141B > 70B > 22B > 7B. The actual ordering is: 70B > 141B > 22B > 7B. More parameters did not compensate for not thinking.

But 0.56 is not exactly a ringing endorsement. If the best model assigns five domains to a regulation, roughly two or three of them will match the librarians’ choices – and the rest won’t. Good enough to tell you what a regulation is broadly about. Not good enough to replace the person who reads it.

Domain-level overlap with human classifications

| Domain | Docs | SaulLM-7B | EuroLLM-22B | SaulLM-141B | DeepSeek-R1-70B |

|---|---|---|---|---|---|

| Agriculture | 250 | 34% | 80% | 83% | 84% |

| International Relations | 300 | 57% | 78% | 80% | 79% |

| Agri-Foodstuffs | 198 | 18% | 71% | 73% | 76% |

| Geography | 457 | 9% | 8% | 33% | 75% |

| European Union | 413 | 67% | 60% | 71% | 65% |

| Environment | 176 | 40% | 70% | 66% | 70% |

| Finance | 96 | 22% | 56% | 51% | 67% |

| Employment | 34 | 4% | 38% | 38% | 67% |

| Transport | 90 | 31% | 56% | 63% | 60% |

| Energy | 29 | 10% | 17% | 39% | 56% |

| Industry | 161 | 24% | 51% | 54% | 54% |

| Trade | 442 | 45% | 51% | 51% | 47% |

| Business & Competition | 113 | 10% | 46% | – | 32% |

| Law | 196 | 34% | 29% | 46% | 40% |

| Social Questions | 142 | 19% | 23% | 26% | 45% |

| Production & Research | 127 | 27% | 9% | 33% | 20% |

| Economics | 59 | 12% | 29% | 16% | 20% |

| Education | 151 | 28% | 8% | 7% | 21% |

| Politics | 86 | 16% | 14% | 24% | 25% |

| International Organisations | 24 | 4% | 13% | 15% | 17% |

| Science | 24 | 12% | 8% | 8% | 15% |

Darker green = closer to the librarians. “Agriculture” and “International Relations” are easy. “Science” and “Education” are near-invisible to all four models.

Where They Agree

Here is the number that sounds worst: across all 890 regulations, not a single one was classified with the exact same set of domains by all four models. Zero. None.

That sounds like total disagreement, but it is not. A regulation can belong to multiple domains at once – a regulation on agricultural trade subsidies might correctly belong to “Agriculture”, “Trade”, and “Economics” simultaneously. Each model picks its own combination of 2 to 6 domains. With 21 possible domains, the chances of four models independently landing on the exact same combination are vanishingly small. But that does not mean they disagree on what the regulation is about.

On 57% of regulations, all four models agreed on at least one domain – they all identified the same core subject. On 98%, at least three out of four found common ground. The disagreement is about breadth, not about core subjects. A sanctions regulation gets tagged “International Relations” by everyone. The debate is whether it also gets “Trade”, “Energy”, “Politics”, or all three.

Which brings us to the finding the experiment was built to test: on the documents where models substantially agreed, accuracy against the human ground truth was 29% higher than on the documents where they diverged. When all four models converge on a domain, that domain tends to match what the librarians assigned. When they split, the classifications drift.

In other words: multi-model agreement is a better predictor of correctness than any single model’s confidence score. And when the models don’t agree, that is exactly where you want a human looking at the document.

Which brings us back to impact classification – the question with no answer key. If agreement predicts accuracy on domains, it stands to reason it does the same for impact. A regulation that all four models independently flag as HIGH is worth reading. A regulation where SaulLM-7B says HIGH and the other three say ROUTINE is probably SaulLM-7B being SaulLM-7B. Not proof – but considerably better than trusting whichever model you happened to install.

What This Suggests

No single model is worth listening to alone. But when all four converge, accuracy against the human baseline jumps by 29%. Wisdom of crowds, applied to language models – independent errors cancel out, shared signal reinforces.

Reasoning beats size. A 70B model with chain-of-thought outperformed a 141B legal specialist on every metric. The model that thinks before answering gives better answers. More parameters did not compensate for not thinking.

Confidence is decorative. All four models reported confidence above 0.85 on virtually every classification – including the wrong ones. Self-reported confidence measures rhetorical commitment, not correctness. The previous experiment found the same thing.

A human in the loop is not optional. The best model overlapped with the human baseline just over half the time. Put differently: if you handed someone a stack of 890 domain classifications and said “the model did these”, roughly 44% of them would not match what the professional librarians assigned. A system that presents a single model’s output as authoritative will be wrong nearly as often as it is right – and confident about it every time.

Local hardware is not ready. A 7B model on a MacBook takes sixteen hours and produces the worst results by a wide margin. The trajectory suggests a consumer laptop before 2030 could do meaningfully better. Whether it will be fast enough for real-time use remains to be seen.

So What

EU regulation is an almost unreasonably good test case for language model classification. Clean documents, public ground truth, stable taxonomy, high volume. If LLMs are going to work anywhere in regulatory monitoring, they should work here. Under those near-ideal conditions, the best model agreed with the human baseline just over half the time.

Replacement is the wrong frame. The more useful question is whether LLM output, aggregated across multiple models, can tell you where to look. A regulation that all four models agree on probably does not need a human to review. A regulation where they split 2–2 probably does.

Four models processed five million words of regulation – the three on rented GPUs in under two hours, the one on a laptop overnight. Their disagreements are structured enough to be useful – if you build around them rather than ignoring them.

Which, to be fair, is also true of most bureaucrats.